Subwarp Interleaving

Subwarp Interleaving interleaves the execution of diverged paths within a warp at fine granularity. The goal is to increase hardware utilization and reduce warp latency in applications with high thread divergence, low warp occupancy, and long pipeline stalls.

GPU Subwarp Interleaving, HPCA 2022

Video

S. Damani, M. Stephenson, R. Rangan, D. R. Johnson, R. Kulkarni, and S. W. Keckler

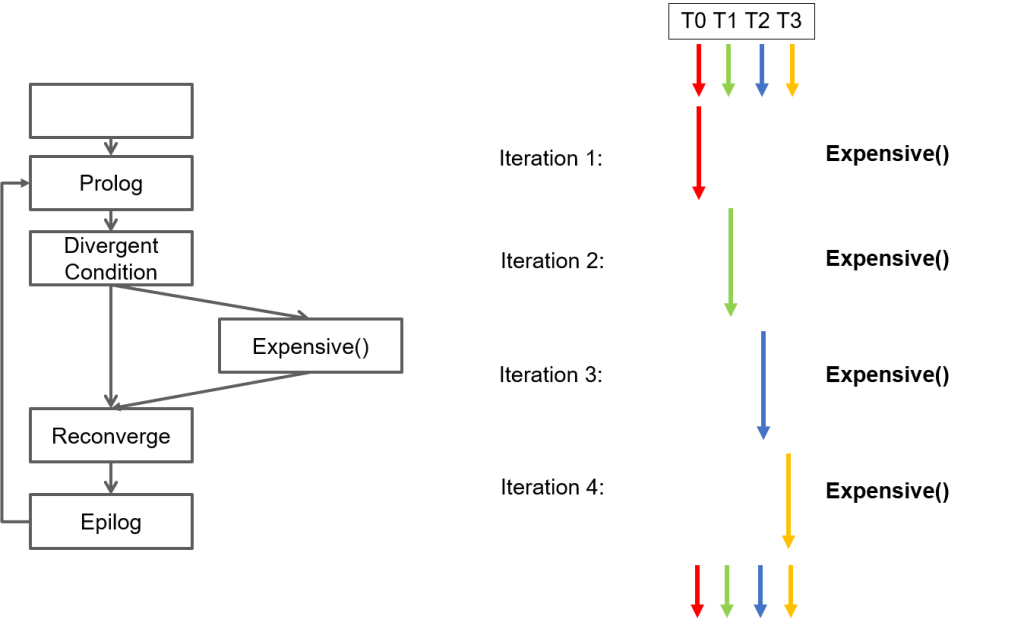

Speculative Reconvergence

Speculative Reconvergence identifies common code blocks that execute serially within divergent loops and modifies the compiler’s branch convergence mechanism to reconverge threads early. This maximizes parallelism within expensive code blocks at the cost of increased serialization along low-cost paths within a GPU program.

Speculative Reconvergence for Improved SIMT Efficiency, CGO 2020

Slides

S. Damani, D. R. Johnson, M. Stephenson, S. W. Keckler, E. Yan, M. McKeown, and O. Giroux

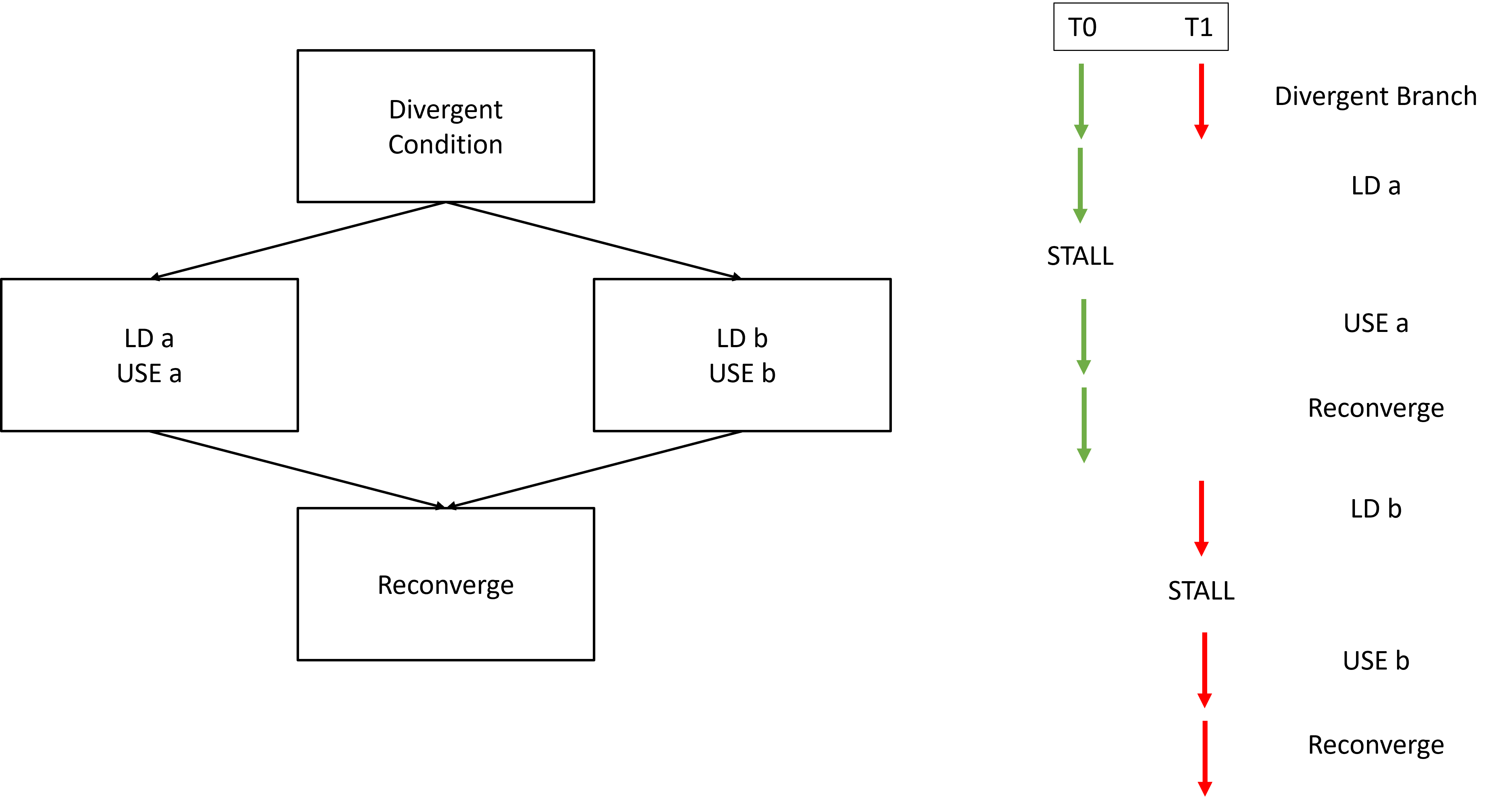

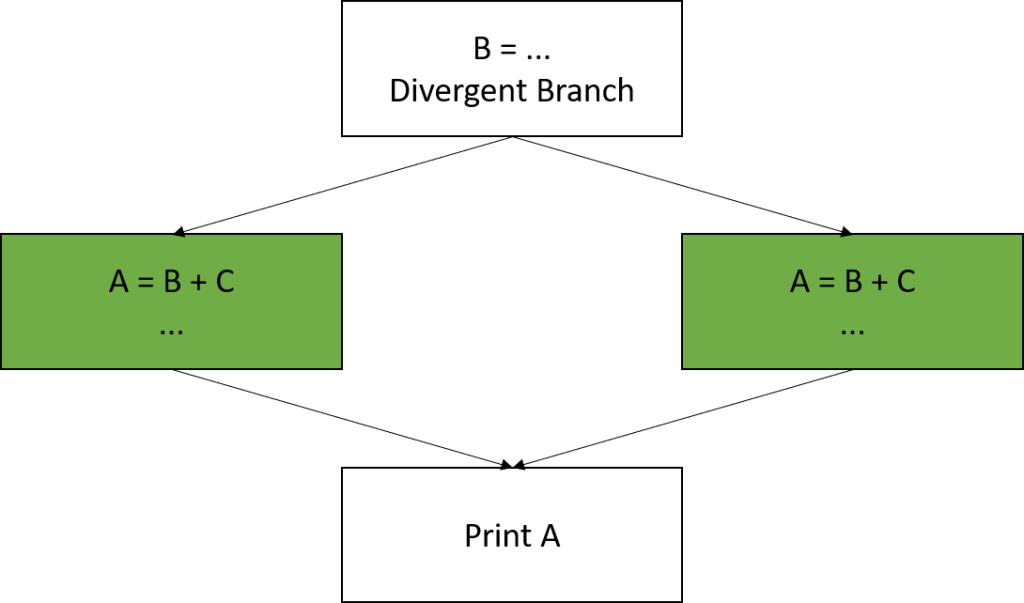

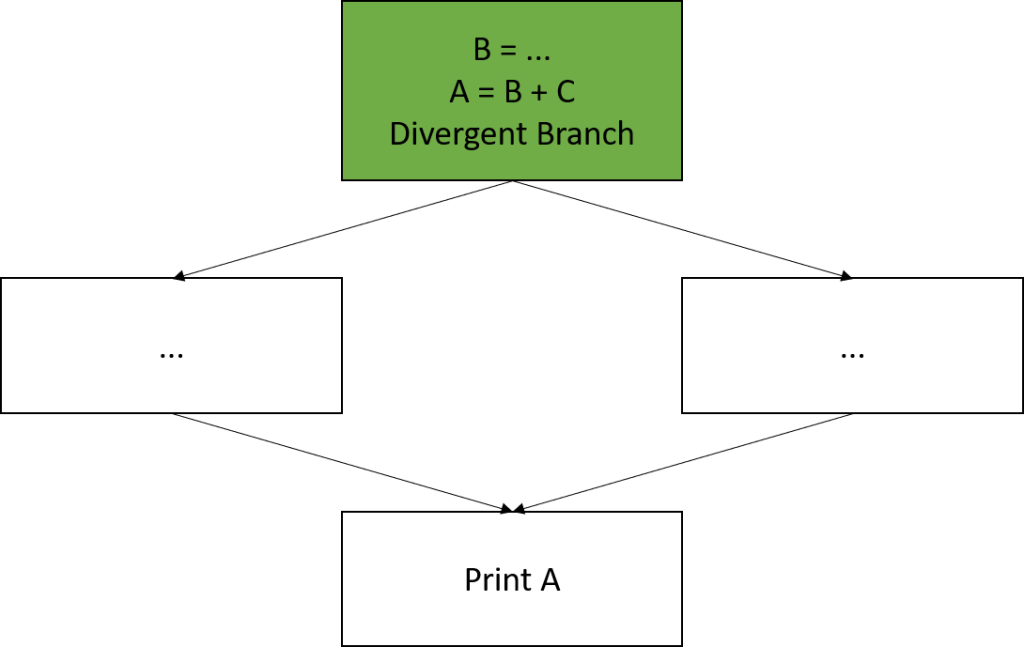
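
The trade-off described for Speculative Reconvergence can be illustrated with a toy cost model. This is only a sketch under stated assumptions, not the compiler transformation from the paper: each thread's loop is a hypothetical string of steps, where `c` is a cheap step and `E` is the expensive common block, and any group of threads running the same kind of step is charged that step's cost once.

```python
def baseline_cycles(threads, cheap, expensive):
    """Threads reconverge after every iteration (assumed baseline).

    In each iteration the cheap and expensive paths are serialized,
    so an iteration pays for every path that at least one thread takes.
    """
    total = 0
    for step in zip(*threads):
        if "c" in step:
            total += cheap
        if "E" in step:
            total += expensive
    return total


def speculative_cycles(threads, cheap, expensive):
    """Threads that reach the expensive block wait there for the others.

    Threads still on cheap steps keep running (with fewer lanes active)
    until every live thread is waiting; the expensive block then runs once.
    """
    pcs = [0] * len(threads)
    total = 0
    while any(pc < len(t) for pc, t in zip(pcs, threads)):
        on_cheap = [i for i, t in enumerate(threads)
                    if pcs[i] < len(t) and t[pcs[i]] == "c"]
        if on_cheap:
            for i in on_cheap:
                pcs[i] += 1
            total += cheap
        else:
            # Every live thread is parked at the expensive block.
            for i, t in enumerate(threads):
                if pcs[i] < len(t):
                    pcs[i] += 1
            total += expensive
    return total


# Four threads, each hitting the expensive block on a different iteration.
threads = ["Eccc", "cEcc", "ccEc", "cccE"]
print(baseline_cycles(threads, cheap=1, expensive=10))     # 44
print(speculative_cycles(threads, cheap=1, expensive=10))  # 16
```

In this made-up example the expensive block is issued once instead of four times, while the cheap steps take six issue slots instead of four, which mirrors the stated cost of extra serialization along low-cost paths.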

Common Subexpression Convergence

Common Subexpression Convergence (CSC) is a compiler optimization that identifies common code along divergent paths and moves it to a convergent region, thereby increasing the SIMT efficiency of a GPU program.

Common Subexpression Convergence, LCPC 2019

Slides

S. Damani and V. Sarkar

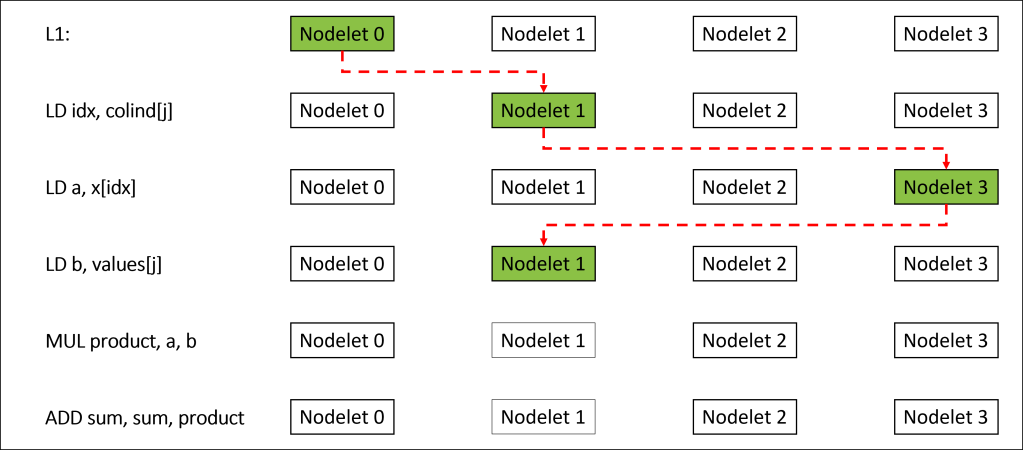

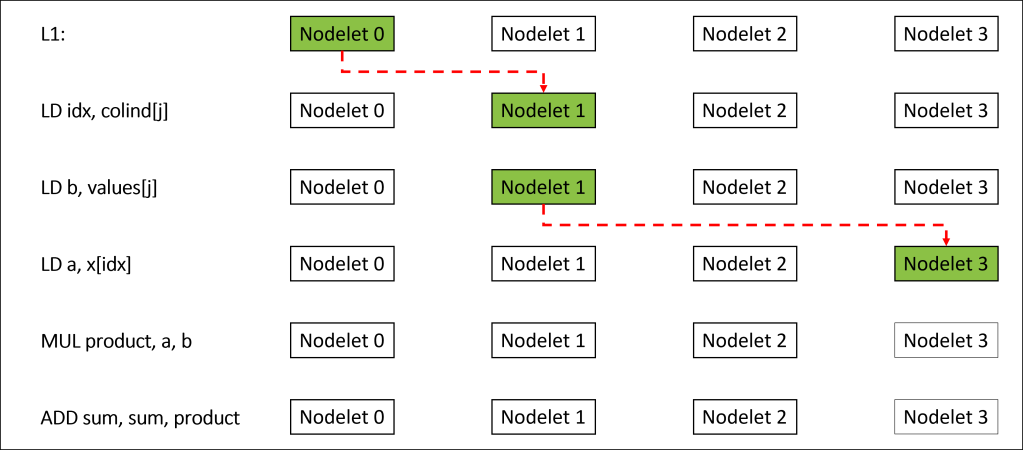
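
The effect of CSC on SIMT efficiency can be sketched with a small cost model. The model below is an illustrative assumption, not the pass itself: a warp has 32 threads, each region is a hypothetical pair of (active threads, instructions issued), and efficiency is the fraction of issued lane slots that do useful work. It also ignores data dependences, which a real compiler would have to respect before moving code.

```python
WARP_SIZE = 32  # assumed warp width


def simt_efficiency(regions):
    """regions: list of (active_threads, instruction_count), issued serially."""
    issued = sum(count for _, count in regions)
    useful = sum(active * count for active, count in regions)
    return useful / (WARP_SIZE * issued)


def before_csc(taken, common, then_only, else_only):
    # Each divergent path carries its own copy of the common code.
    return [(taken, then_only + common),
            (WARP_SIZE - taken, else_only + common)]


def after_csc(taken, common, then_only, else_only):
    # The common code runs once in a convergent region with all threads active.
    return [(taken, then_only),
            (WARP_SIZE - taken, else_only),
            (WARP_SIZE, common)]


# Half the warp takes each path; 20 of the 25 instructions per path are common.
args = dict(taken=16, common=20, then_only=5, else_only=5)
print(simt_efficiency(before_csc(**args)))  # 0.5
print(simt_efficiency(after_csc(**args)))   # about 0.83
```

The same amount of useful work is done in both cases; the gain comes from issuing the common instructions once for the full warp rather than twice for half of it.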

Memory Access Scheduling

Memory Access Scheduling is an instruction scheduling technique that identifies co-located memory accesses and groups them together to minimize the number of thread migrations on the EMU architecture.

Memory Access Scheduling to Reduce Thread Migrations, CC 2022

Slides

S. Damani, P. Barua, and V. Sarkar
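
The idea behind grouping co-located accesses can be sketched as follows. This is a simplified illustration under assumptions, not the scheduler from the paper: each access is a hypothetical (name, location) pair, a thread is assumed to migrate whenever its next access lives at a different memory location than the one it is currently on, and all accesses are assumed independent so they can be reordered freely.

```python
def count_migrations(accesses, start):
    """Count moves for a thread that starts at location `start`."""
    migrations = 0
    current = start
    for _, location in accesses:
        if location != current:
            migrations += 1
            current = location
    return migrations


def group_by_location(accesses):
    """Place accesses to the same location next to each other.

    Locations keep the order in which they first appear, and accesses
    within one location keep their original relative order.
    """
    first_seen = {}
    for _, location in accesses:
        first_seen.setdefault(location, len(first_seen))
    return sorted(accesses, key=lambda access: first_seen[access[1]])


# Arrays a and b are assumed to live at locations 0 and 1 respectively.
accesses = [("a[i]", 0), ("b[i]", 1), ("a[i+1]", 0), ("b[i+1]", 1)]
print(count_migrations(accesses, start=0))                     # 3
print(count_migrations(group_by_location(accesses), start=0))  # 1
```

A real scheduler would only reorder accesses whose dependences allow it; the sketch skips that check to keep the counting logic visible.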